Overview

Following the Week 10 prototype presentation and feedback, Week 11 shifted focus toward one of the project's core pillars: Embodied Interaction. While previous weeks concentrated on visualizing noise through static artifacts and data representations, this week I experimented with interactive systems that respond to human presence and gesture.

The goal was to move beyond passive visualization toward active engagement, testing whether embodied interaction could create more immersive and meaningful encounters with noise as a material. However, this experimental direction also surfaced critical questions about how interaction relates to the project's core theme of noise pollution.

Shifting to Embodied Interaction

From Static Visualization to Interactive Systems

Up until this point, the project had focused primarily on creating artifacts that visualize noise: noise maps, photographic composites, and audio-visual representations. While these approaches successfully demonstrated that noise could be transformed into aesthetic forms, they remained fundamentally static. Viewers could observe the visualizations but not actively participate in shaping them.

Embodied interaction offered a different paradigm: rather than presenting noise as finished compositions, interactive systems could allow audiences to explore, manipulate, and experience noise through their own bodies and movements. This shift aligned with the project pillar of creating embodied, sensory experiences rather than purely visual ones.

Why Embodied Interaction Matters

The rationale for exploring embodied interaction emerged from several considerations:

- Engagement: Interactive systems can invite deeper, longer-duration engagement than static displays

- Understanding through Doing: Physical interaction can create intuitive understanding that visual observation alone cannot achieve

- Personalization: Each viewer's interaction creates a unique experience, making the work feel personally relevant

- Playfulness: Interaction introduces an element of play and experimentation that can make complex concepts more accessible

The challenge was to develop interactive prototypes that demonstrated these principles while remaining technically feasible within the available time and my current skill level.

Experiment 1: Hand Tracking Point Cloud Manipulation

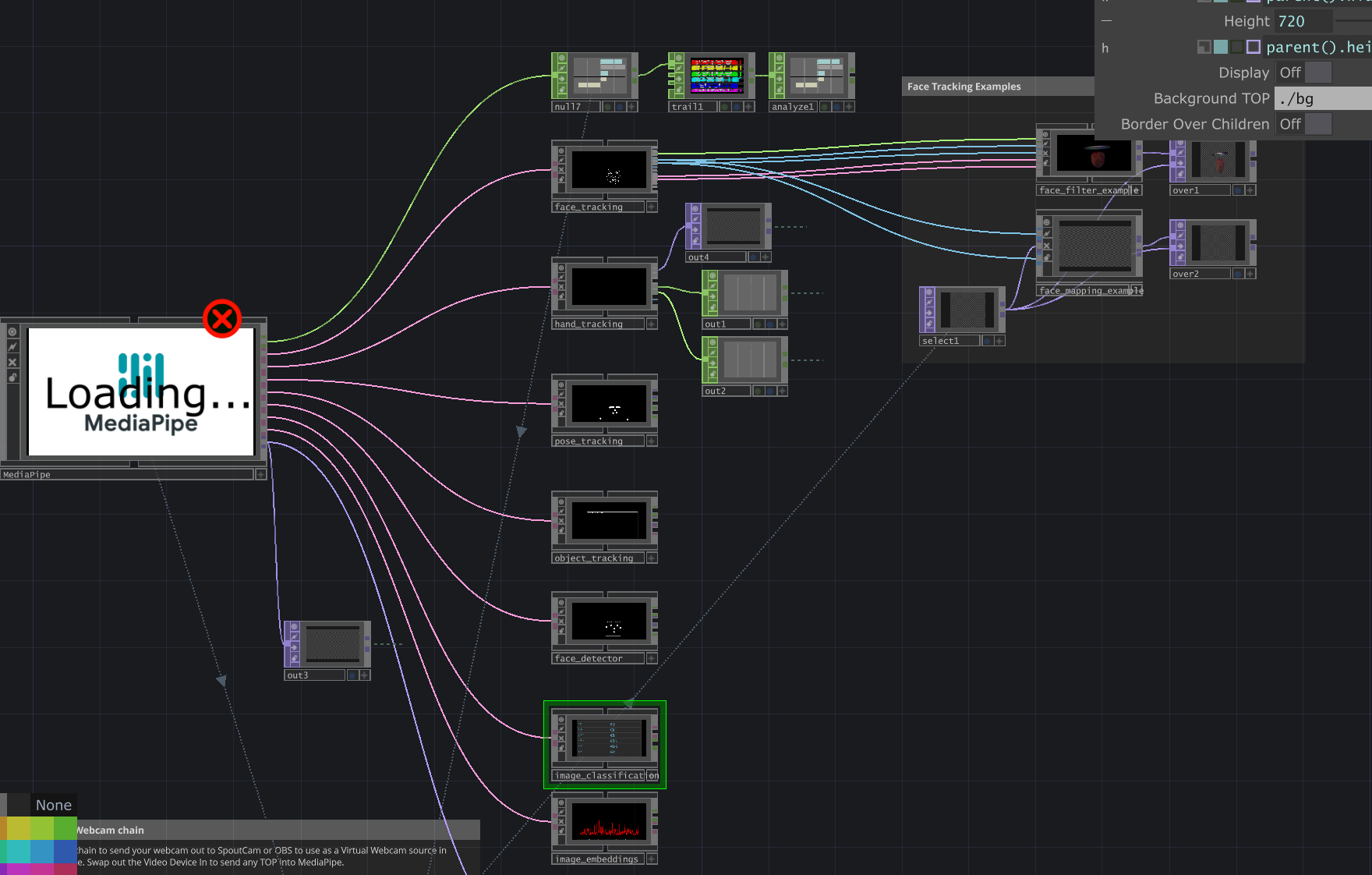

Technical Approach: MediaPipe Integration

The first experiment used MediaPipe, Google's computer-vision framework, through a plugin that brings its motion-capture functionality into TouchDesigner. Specifically, I used MediaPipe's hand tracking to build a system in which hand gestures manipulate a point cloud visualization in real time.

The technical setup involved:

- Camera input feeding into MediaPipe's hand detection algorithm

- Extraction of hand landmark positions (fingertips, palm center, wrist) in 3D space

- Mapping hand position and gesture data to point cloud transformation parameters

- Real-time rendering of the dynamically adjusting point cloud based on hand movement
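The mapping step in the list above can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the TouchDesigner network used in the experiment: it assumes the tracker delivers MediaPipe's 21 hand landmarks as normalized (x, y, z) tuples (x and y in 0..1 of the image, z a depth relative to the wrist), and the palm-centre estimate, the scene scaling and all function names are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]

# MediaPipe Hands landmark indices: wrist plus the base (MCP) joint of each finger.
WRIST = 0
FINGER_MCPS = (5, 9, 13, 17)


def palm_center(landmarks: List[Point]) -> Point:
    """Estimate the palm centre as the mean of the wrist and the four MCP joints.

    MediaPipe has no dedicated palm-centre landmark, so this average is an assumption.
    """
    idx = (WRIST,) + FINGER_MCPS
    n = len(idx)
    return (
        sum(landmarks[i][0] for i in idx) / n,
        sum(landmarks[i][1] for i in idx) / n,
        sum(landmarks[i][2] for i in idx) / n,
    )


def to_scene(p: Point, width: float = 2.0, height: float = 2.0) -> Point:
    """Map normalized image coordinates (0..1, y pointing down) to a scene centred on the origin.

    z is passed through unchanged (MediaPipe's z is a relative depth, not metres).
    """
    x, y, z = p
    return ((x - 0.5) * width, (0.5 - y) * height, z)


def follow_hand(points: List[Point], hand: Point) -> List[Point]:
    """Translate a cloud built around the origin so that it sits at the hand position."""
    hx, hy, hz = hand
    return [(px + hx, py + hy, pz + hz) for px, py, pz in points]


# Per frame: landmarks from the tracker -> palm centre -> scene position -> moved cloud.
# cloud = follow_hand(base_cloud, to_scene(palm_center(landmarks)))
```

Here the whole cloud simply follows the palm; other mappings, such as rotation or attraction toward a fingertip, would slot into `follow_hand` in the same way.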

Interaction Design

The interaction model allowed users to:

- Move their hand to control the position and flow of the point cloud

- Use gestures to adjust density, spread, and movement patterns

- Create fluid, organic shapes through natural hand movements

- Experience immediate visual feedback that responds to subtle changes in hand position
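A gesture-to-parameter mapping of the kind described above could look like the following sketch. The pinch gesture, the 0.2 to 1.2 normalization range, the parameter ranges and the choice to spread and thin the cloud as the pinch opens are hypothetical illustrations, not the values used in the prototype; only the landmark indices (0 wrist, 4 thumb tip, 8 index fingertip, 9 middle-finger knuckle) follow MediaPipe's hand model.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]

# MediaPipe Hands landmark indices used below.
WRIST, THUMB_TIP, INDEX_TIP, MIDDLE_MCP = 0, 4, 8, 9

# Hypothetical pinch ratios treated as "fully closed" and "fully open"; tune by hand.
PINCH_CLOSED, PINCH_OPEN = 0.2, 1.2


def clamp01(v: float) -> float:
    return max(0.0, min(1.0, v))


def lerp(a: float, b: float, t: float) -> float:
    return a + (b - a) * t


def pinch_amount(landmarks: List[Point]) -> float:
    """0.0 for a closed thumb-index pinch, 1.0 for a wide-open one.

    The thumb-index gap is divided by the wrist-to-middle-knuckle length so the value
    stays roughly constant as the hand moves toward or away from the camera.
    """
    hand_size = math.dist(landmarks[WRIST], landmarks[MIDDLE_MCP])
    if hand_size == 0.0:
        return 0.0  # degenerate detection: treat as closed
    ratio = math.dist(landmarks[THUMB_TIP], landmarks[INDEX_TIP]) / hand_size
    return clamp01((ratio - PINCH_CLOSED) / (PINCH_OPEN - PINCH_CLOSED))


def gesture_params(landmarks: List[Point]) -> Dict[str, float]:
    """Hypothetical mapping: opening the pinch spreads the cloud and thins it out."""
    t = pinch_amount(landmarks)
    return {
        "spread": lerp(0.1, 1.0, t),
        "density": round(lerp(20000, 500, t)),  # number of points to draw
    }
```

Dividing by hand size is the main design choice here: without it, simply moving the hand closer to the camera would read as opening the pinch.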

The resulting experience felt intuitive and playful. Users could "sculpt" the point cloud through gesture, creating a sense of direct manipulation that static visualizations could not provide.

Hand Tracking Experiment: Visual Documentation

Process Videos

The following videos demonstrate the hand tracking system in action, showing how hand gestures translate into point cloud transformations in real-time.

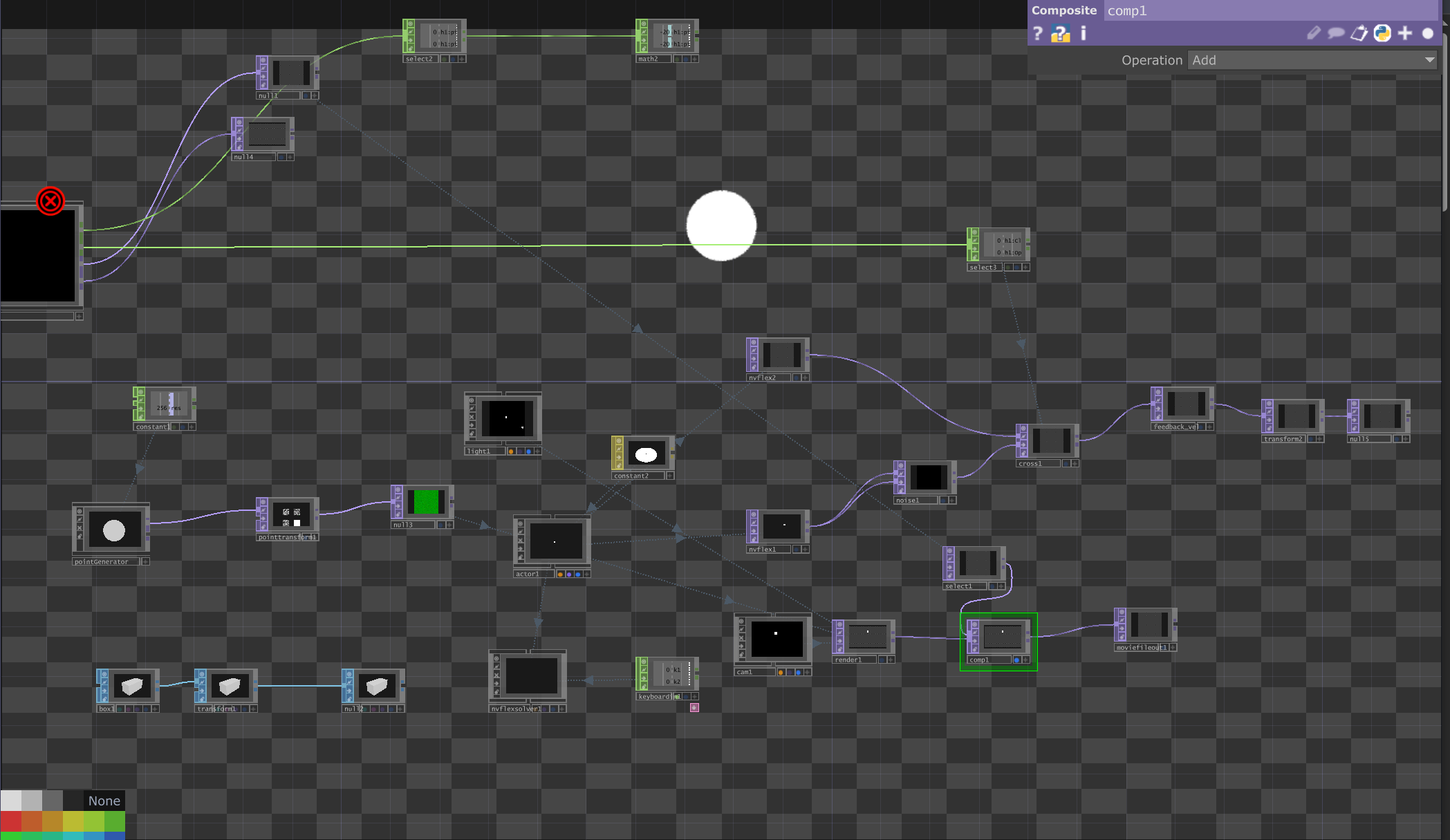

Experiment 2: Depth-Based Human Form Point Cloud

Technical Approach: Depth Sensing

The second experiment took a different approach to embodied interaction by using depth camera technology to create a point cloud representation of the human body itself. Unlike the hand tracking experiment, which used gestures to manipulate an abstract point cloud, this system transformed the viewer's entire body into a living point cloud visualization.

The technical implementation involved:

- Camera-based depth sensing to measure the distance of objects from the camera

- Point cloud generation where each pixel's depth value determines its 3D position

- Real-time filtering to isolate human forms from the background

- Rendering the depth data as a dynamic point cloud that mirrors the viewer's movements
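Turning a depth frame into a point cloud is usually a pinhole back-projection. The sketch below assumes a depth frame in metres stored as a nested list and hypothetical camera intrinsics (`fx`, `fy`, `cx`, `cy`); the near/far depth band is a deliberately simple stand-in for the background filtering and may differ from the filter actually used in the experiment.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def depth_to_point_cloud(
    depth: Sequence[Sequence[float]],
    fx: float, fy: float, cx: float, cy: float,
    near: float = 0.5, far: float = 2.5, step: int = 1,
) -> List[Point]:
    """Back-project a depth frame (metres per pixel) into camera-space 3D points.

    Pinhole model, for pixel column u, row v and depth Z:
        X = (u - cx) * Z / fx
        Y = (v - cy) * Z / fy   (image rows grow downward, so +Y points down)
    Pixels outside [near, far] are dropped: this removes invalid zero readings and
    anything behind a person standing inside that depth band.
    `step` subsamples the frame to keep the point count manageable in real time.
    """
    points: List[Point] = []
    for v in range(0, len(depth), step):
        row = depth[v]
        for u in range(0, len(row), step):
            z = row[u]
            if not (near <= z <= far):
                continue  # background, too close, or no depth reading
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points
```

A person standing inside the band survives while the wall behind them is discarded; in practice the band would be tuned to the installation space, and a larger `step` trades detail for frame rate.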

Embodying the Visualization

This approach created a fundamentally different kind of embodied experience. Rather than manipulating an external object, viewers became the visualization itself. Their body, movements, and presence were directly translated into visual form. This created several interesting effects:

- Immediate feedback loop between movement and visual response

- Dissolution of the boundary between viewer and artwork

- Playful exploration as viewers tested how different movements affected the visualization

- Aesthetic transformation of the familiar (human body) into the abstract (point cloud)

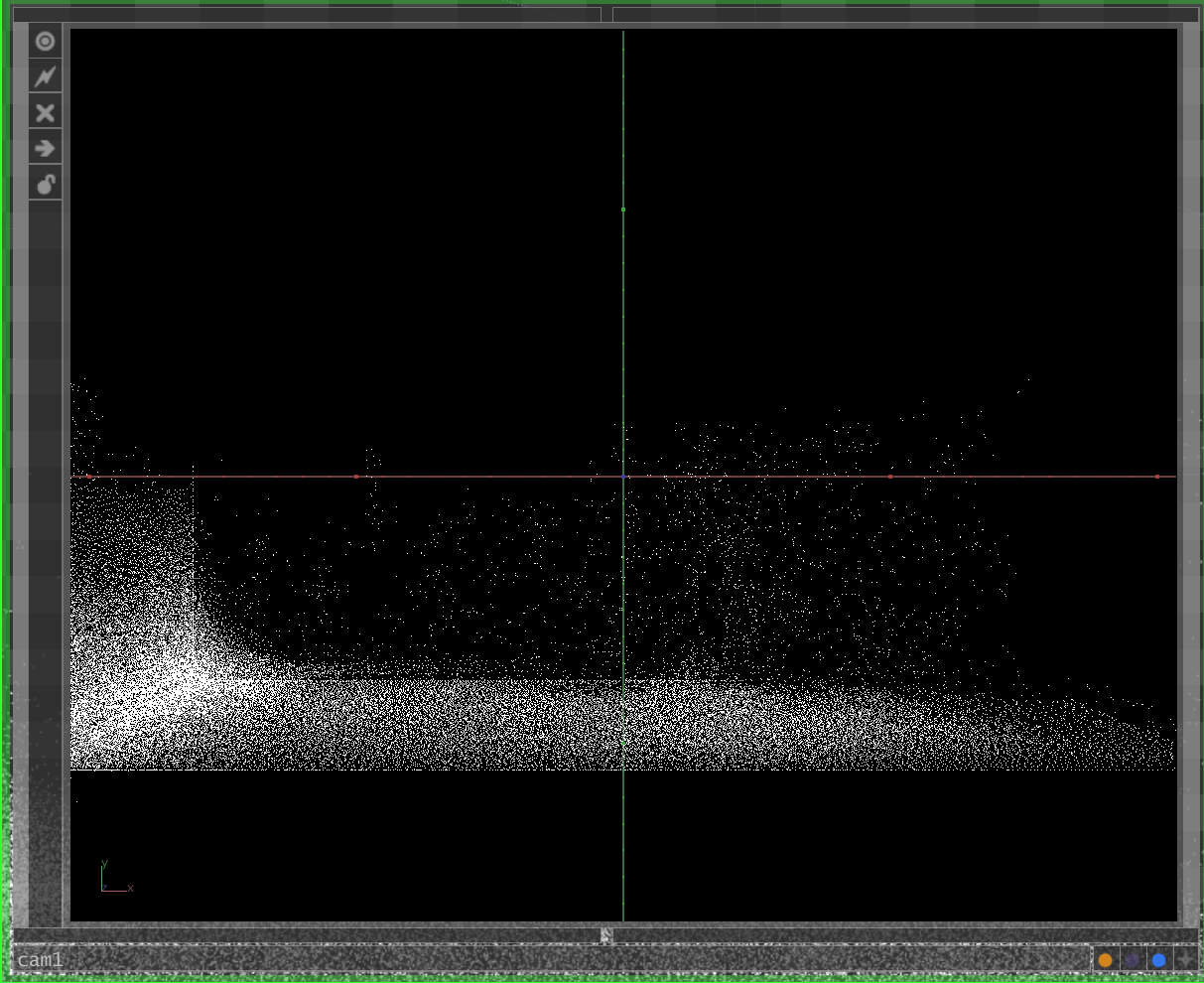

Depth-Based Point Cloud: Visual Documentation

Process Videos

These videos document the depth-based point cloud filter in action, demonstrating how human presence and movement are captured and transformed into dynamic point cloud representations.

Faculty Feedback: The Missing Link to Noise

The Core Critique

The professor's feedback was direct and crucial: "Embodied interaction alone does not show noise."

While acknowledging the technical competence and aesthetic appeal of the interactive prototypes, the professor pointed out a fundamental disconnect. The experiments successfully demonstrated embodied interaction as a principle, but they failed to connect meaningfully to the project's central theme: noise pollution and its transformation into aesthetic experience.

The point cloud manipulations were engaging as interactive experiences, but they could represent anything. There was nothing specifically about noise in the interaction model, the visual output, or the conceptual framing. The work had drifted from being about noise to being about interaction for its own sake.

Recognizing the Gap

The feedback resonated immediately because it was accurate. The experiments were built to demonstrate embodied interaction as a technical capability rather than to explore noise as a subject. Although I had intended to combine embodied interaction with noise visualization eventually, the prototypes as built existed in isolation from the project's core concerns.

The professor's critique revealed several issues:

- Conceptual Drift: The focus had shifted from "noise" to "interaction," losing the thematic anchor

- Technical Limitations: My current skill level made it difficult to meaningfully integrate embodied interaction with noise data and visualization

- Premature Complexity: Adding interaction before establishing a strong visual language for noise created confusion rather than depth

- Missing Message: Without clear connection to noise, the work could not communicate its intended message about transforming urban soundscapes

Strategic Decision: Return to Core Theme

Following this feedback, I made a strategic decision to temporarily set aside embodied interaction and return focus to the project's foundation: visualizing noise in ways that communicate meaningful messages.

This decision was pragmatic rather than conceptual. Embodied interaction remains a valid and valuable pillar for the project, but integrating it successfully requires:

- Stronger technical skills in real-time systems and sensor integration

- Clearer conceptual framework for how interaction reveals or transforms noise

- Established visual language that interaction can build upon

- More development time to refine the interaction models

Rather than forcing embodied interaction into the project prematurely, the revised strategy prioritizes establishing strong foundations first:

- Near-term focus: Develop compelling noise visualization that clearly communicates the transformation from pollution to aesthetic resource

- Medium-term goal: Strengthen technical skills in interactive systems and sound-responsive programming

- Long-term integration: Return to embodied interaction once the core visual language is established and technical capability has improved

Revised Direction: Message Through Visualization

The professor recommended focusing on creating noise visualizations that effectively communicate a message. This approach prioritizes clarity, conceptual strength, and meaningful transformation of noise data into visual experience.

What This Means in Practice

Moving forward, the project development will concentrate on:

- Data Collection: Systematic recording and analysis of urban noise across diverse environments

- Visual Translation: Developing clear, consistent methods for translating noise characteristics into visual parameters

- Aesthetic Refinement: Creating visualizations that are beautiful, compelling, and invite sustained attention

- Conceptual Clarity: Ensuring that the transformation from noise to visualization communicates the core message: noise as aesthetic material rather than pollution

- Design Coherence: Addressing the Week 10 feedback by establishing unified visual language across all components
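As a possible first step toward the Visual Translation item, here is a sketch of turning one frame of recorded audio into a visual parameter. It assumes samples normalized to -1..1 and hypothetical quiet/loud thresholds. Note that dBFS is a digital level relative to full scale, not a calibrated sound-pressure level in dB(A); measuring the latter would need a calibrated meter or microphone.

```python
import math
from typing import Sequence


def rms_dbfs(samples: Sequence[float], floor_db: float = -120.0) -> float:
    """RMS level of one audio frame in dB relative to full scale (samples in -1..1).

    A signal that sits at +/-1.0 the whole time gives 0 dB; silence is clamped to floor_db.
    """
    if len(samples) == 0:
        return floor_db
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if rms == 0.0:
        return floor_db
    return max(floor_db, 20.0 * math.log10(rms))


def level_to_param(db: float, quiet_db: float = -60.0, loud_db: float = 0.0) -> float:
    """Linearly map a level between quiet_db and loud_db onto 0..1, clamped at both ends."""
    t = (db - quiet_db) / (loud_db - quiet_db)
    return max(0.0, min(1.0, t))
```

The resulting 0..1 value could then drive, for example, the spread or density of a point cloud, so that the visual output is tied directly to measured noise.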

Lessons from the Detour

While the embodied interaction experiments did not directly advance the project's immediate goals, they were not wasted effort. The process revealed several important insights:

- The importance of maintaining clear connection to core themes even while exploring new directions

- The value of technical experimentation for building skills that will be useful later

- The need to balance ambition with current capabilities

- The power of direct, honest feedback in refocusing effort toward what matters most

Looking Ahead

Week 12 and beyond will focus on:

- Intensive noise data collection across Singapore's diverse urban zones

- Developing refined visualization techniques that clearly communicate noise characteristics

- Creating a unified design system that brings coherence to the various project components

- Testing how effectively the visualizations communicate their intended message to audiences

- Building a strong foundation that embodied interaction can eventually enhance

The detour into embodied interaction, while not immediately productive, clarified what the project needs most right now: a clear, compelling visual language for noise that communicates meaningful transformation. Everything else can build from that foundation.